AI forces you to think harder

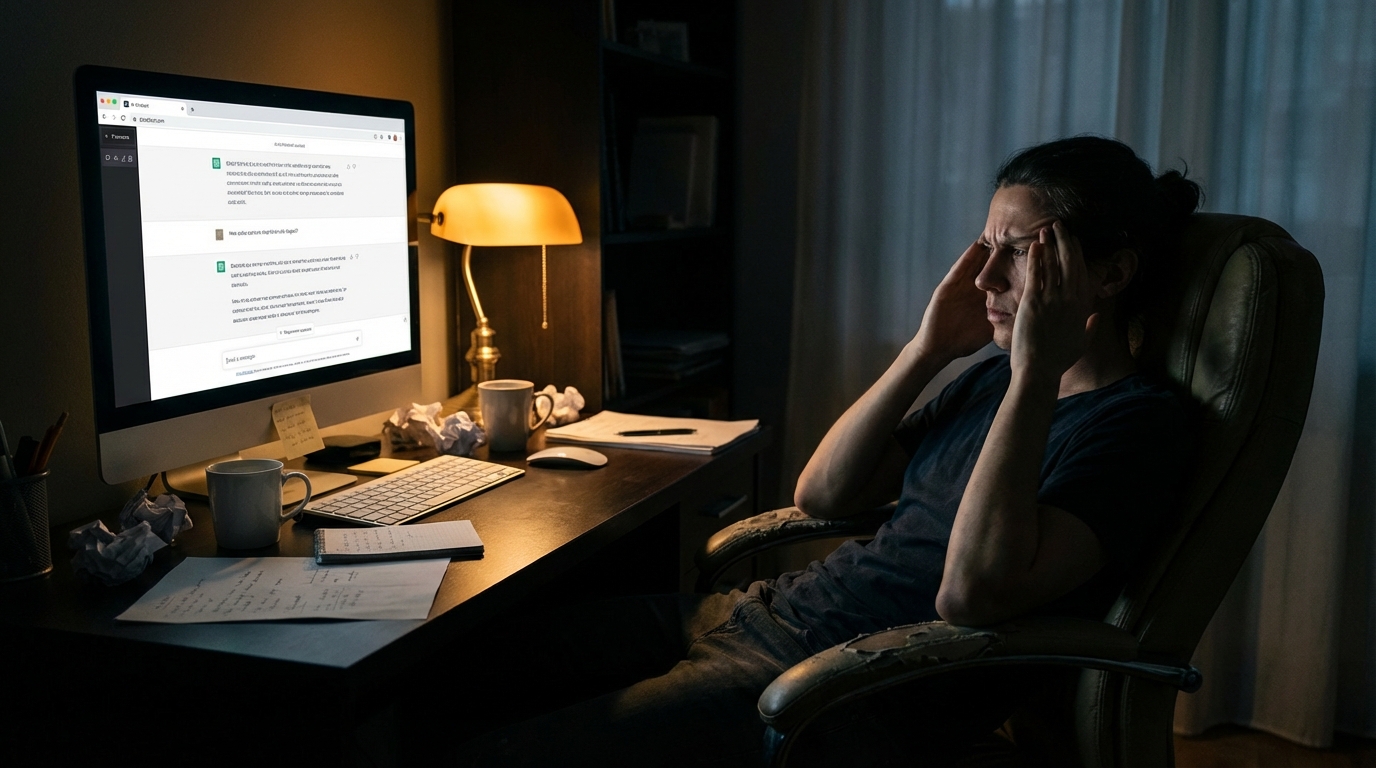

A senior developer at a client tried AI-assisted development for three days.

He reached the same output as with manual coding. Same functionality, same quality, roughly the same time. He was not faster. He was not slower.

But he said one thing that stuck: "I had to think in a completely different way."

The promise that doesn't hold

Everyone sells AI as "think less, do more." That is the universal promise. The tool takes over the heavy cognitive lifting and you can focus on what matters.

That is not true.

What happens in practice is that you stop writing and start defining. You stop implementing and start validating. You stop building details and start reviewing wholes.

None of those things is easier. They are harder. But they are harder in a different way -- and that shift is invisible until you actually make it.

From builder mindset to reviewer mindset

In traditional development you think: how do I implement this? You break down the problem, write the solution, test, iterate. Your brain works like a builder.

With AI you think: is this correct? Is it safe? Is it the right approach? Does it do what I asked for -- or something subtly different? Your brain works like a reviewer.

The reviewer mindset is cognitively MORE demanding. Building is sequential -- you take one step at a time and each step gives clear feedback. Reviewing requires you to hold the entire system in your head at once. You have to understand not only what the code does but what it SHOULD do, and then compare the two.

That is why the senior developer at the client did not get faster. He switched cognitive work -- from producing to quality-assuring. And quality assurance requires deeper understanding than production.

What nobody tells you

Nobody who sells AI tools tells you that you need to think harder. It doesn't fit the narrative. You are supposed to think LESS. You are supposed to be FREER. You are supposed to focus on the creative work while the machine does the boring part.

But the validation IS the creative work. And validation is not boring -- it is demanding. It requires you to question every suggestion, understand every consequence, see every side effect.

If you don't think harder, you are not validating. If you are not validating, you are vibe coding. And vibe coding is not a technique -- it is an abdication of professional responsibility.

The quality of thinking becomes the bottleneck

Here is the insight that changes everything: in an AI-assisted workflow, it is not the tools that determine the quality. It is the quality of your thinking.

Two people with the same AI tool get radically different results. Not because they prompt differently -- but because they think differently. The person who understands the domain, who sees architectural consequences, who knows what can go wrong in production -- that person gets usable output. The person who lacks that knowledge gets output that looks good but doesn't hold up.

The tool amplifies what you already know how to do. It does not compensate for what you cannot do. And knowing how does not mean knowing facts -- it means being able to reason about facts.

The cognitive demand IS responsibility

There is a direct line from this insight to personal responsibility.

If AI required less thinking, you could argue that anyone can use it. That juniors become as good as seniors. That experience matters less.

But AI requires MORE thinking -- just of a different kind. That means experience becomes more valuable, not less. It means that someone who has built systems for twenty years has an advantage that cannot be skipped.

The cognitive demand is not an unfortunate side effect of AI-assisted development. It IS responsibility in action. Thinking harder IS taking responsibility. They are not two separate things -- they are the same thing seen from different angles.

What it means in practice

Every day I sit with AI-generated code and ask questions. Not of the AI -- of myself. Do I understand why it chose this solution? Do I see what happens under load? Do I know what happens if an external service does not respond?

That is thinking. Hard thinking. It is not the thinking I did five years ago -- back then I thought about implementation. Now I think about validation, consequences, system effects.

I don't think less. I think differently. And differently, in this case, means harder.

AI forces you to think harder. That is not a problem. That is the point.

See also: Jag är smartare än AI (series 1) and Mitt jobb är frågorna (series 13).

Mindtastic on the technique behind it -- the Socratic method as an AI working method.